This post explains how to host services on Google Kubernetes Engine (GKE). Parts of it also apply to regular (non-GKE) Kubernetes clusters. The post covers:

- allowing multiple services to be deployed in different namespaces

- allowing multiple (sub)domains to be deployed

- making a service available through a public and fixed IP accessible using a host name

- making it accessible over HTTPS using a certificate

- HTTP redirect to HTTPS

The treatment is fairly high level: it focuses on which resources and fields are relevant and on how the different Kubernetes resources relate to each other. Some snippets of YAML configuration are included as well. This should be sufficient to ‘roll your own’.

Deploying services in different namespaces

The basic architecture we start off with is as follows:

In this basic setup, there is one Ingress object in its own namespace, and each Service that it exposes lives in a separate namespace together with its deployment. However, an Ingress cannot route to Services in a namespace other than its own, so this setup does not work as is. This limitation is expected to be lifted in a future version of Kubernetes using the Gateway API.

One workaround is to deploy a separate Ingress in every Service namespace. However, this leads to other problems, since a separate IP address must then be used for each service.

A better solution is to create a separate ingress namespace, deploy a reverse proxy there (httpd is used in the example, but nginx is of course also possible), and have the proxy reach each service through its cluster-local DNS name. A service x deployed in namespace y can be accessed using the DNS name x.y.svc.cluster.local.
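To make the naming concrete, here is a minimal sketch of a Service named x in namespace y. The selector label and the container port are assumptions for illustration; only the name and namespace determine the DNS name.

```yaml
# Hypothetical Service "x" in namespace "y".
# Reachable inside the cluster as x.y.svc.cluster.local.
apiVersion: v1
kind: Service
metadata:
  name: x
  namespace: y
spec:
  selector:
    app: x              # assumed pod label, not from the original post
  ports:
    - port: 80          # port the reverse proxy connects to
      targetPort: 8080  # assumed container port
```

Because the Service listens on port 80, the proxy can address it without an explicit port, as in http://x.y.svc.cluster.local/.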

On Apache, this can be configured as follows:

ProxyPass /hello http://x.y.svc.cluster.local/hello
ProxyPassReverse /hello http://x.y.svc.cluster.local/hello

The architecture for two services, x in namespace y and x2 in namespace y2, then becomes:

This architecture is quite flexible. It allows SSL termination using a single Ingress rule and provides complete flexibility in allocating services to namespaces.

The Apache deployment is configured through a ConfigMap resource that contains the httpd.conf file. The ConfigMap is mounted into the pod as follows:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: httpd
  namespace: exposure
  ...
spec:
  ...
  template:
    ...
    spec:
      containers:
        - name: httpd
          image: httpd:2.4
          ...
          volumeMounts:
            - name: httpd-conf
              mountPath: /usr/local/apache2/conf/httpd.conf
              subPath: httpd.conf
      volumes:
        - name: httpd-conf
          configMap:
            name: httpd-config

Note the use of the subPath configuration in the deployment spec to mount only a single file from the httpd-conf volume, which keeps all other files in the /usr/local/apache2/conf/ directory intact. The config map can be created from an existing httpd.conf file as follows:

kubectl create configmap --namespace exposure \
  httpd-config --from-file=httpd.conf
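As an alternative to the imperative command, the same ConfigMap can be kept in version control as a manifest. The following is only a sketch: the httpd.conf value is an excerpt rather than a complete file, and the paths /hello and /world are hypothetical. The two LoadModule lines are included because reverse proxying over HTTP requires mod_proxy and mod_proxy_http to be loaded.

```yaml
# Sketch only: the httpd.conf value below is an excerpt, not a complete file.
apiVersion: v1
kind: ConfigMap
metadata:
  name: httpd-config
  namespace: exposure
data:
  httpd.conf: |
    # ... the rest of the adapted stock httpd.conf goes here ...
    LoadModule proxy_module modules/mod_proxy.so
    LoadModule proxy_http_module modules/mod_proxy_http.so

    # Service x in namespace y (hypothetical path /hello)
    ProxyPass        /hello http://x.y.svc.cluster.local/hello
    ProxyPassReverse /hello http://x.y.svc.cluster.local/hello

    # Service x2 in namespace y2 (hypothetical path /world)
    ProxyPass        /world http://x2.y2.svc.cluster.local/world
    ProxyPassReverse /world http://x2.y2.svc.cluster.local/world
```

Keep in mind that a file mounted with subPath is not refreshed when the ConfigMap changes, so the pods have to be restarted after an update, for example with kubectl rollout restart deployment/httpd --namespace exposure.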

The httpd.conf used is an adaptation of the file that comes standard with the container. Since we are terminating SSL using Ingress, the httpd config is without any SSL configuration or reference to certificate files.

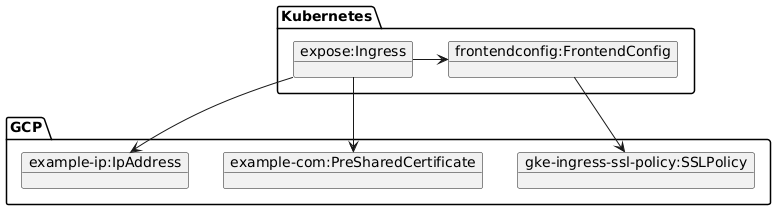

Exposing the service externally

To expose the httpd service externally (and thereby all services for which it is a reverse proxy), we need to introduce the FrontendConfig resource. The FrontendConfig is a custom resource that, as far as I know, is only available on GKE; it is used to configure Ingress features. It can refer to an SSL policy, which is a GCP object that defines SSL features for the HTTPS connection.

Using this setup, we can configure:

- the certificate used

- HTTP to HTTPS redirect

- the external IP address

It is easiest to start with the Ingress configuration:

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: httpd-ingress
  annotations:
    #kubernetes.io/ingress.allow-http: "false"
    kubernetes.io/ingress.global-static-ip-name: example-ip
    ingress.gcp.kubernetes.io/pre-shared-cert: example-com
    networking.gke.io/v1beta1.FrontendConfig: frontendconfig
  namespace: exposure
spec:
  # tls config not needed since a pre-shared certificate
  # is used.
  #tls:
  #  - hosts:
  #      - example.com
  #    secretName: example-com-certificates
  rules:
    - host: example.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: httpd
                port:
                  number: 80

First of all, we see that all traffic is forwarded to httpd. This is fine, since httpd distributes the traffic over the backend services; as far as Ingress is concerned, it just routes to httpd. Next, we see several other features.
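The Ingress backend refers to a Service named httpd on port 80 in the exposure namespace, which is not shown in this post. A minimal sketch of such a Service could look as follows. The pod label app: httpd is an assumption, as is the choice of type NodePort: GKE Ingress needs the backend Service to be either of type NodePort or enabled for network endpoint groups (container-native load balancing).

```yaml
# Sketch of the Service behind the Ingress backend (name: httpd, port: 80).
apiVersion: v1
kind: Service
metadata:
  name: httpd
  namespace: exposure
spec:
  type: NodePort      # assumption; a NEG-enabled Service is the alternative
  selector:
    app: httpd        # assumed label on the httpd pods
  ports:
    - port: 80        # must match the port number in the Ingress backend
      targetPort: 80  # the httpd:2.4 image listens on port 80 by default
```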

The annotation kubernetes.io/ingress.allow-http can be used to disable http but this is commented out since we allow http and will do a redirect to https instead using the FrontendConfig.

Next, the annotation kubernetes.io/ingress.global-static-ip-name defines the name of a previously created static IP address in GCP which is the static IP address under which the services are exposed.

The annotation ingress.gcp.kubernetes.io/pre-shared-cert defines the name of the pre-shared certificate that is used.

A pre-shared certificate is a certificate registered at GCP. Based on the domain name, GCP automatically chooses the certificate. This means that explicit configuration of the certificate using the tls section is not needed and this part is therefore commented out. It also allows the same certificate to be used by multiple GKE clusters and is thus lower maintenance than using the alternative setup by defining a secret per GKE cluster for the certificates.

To create the pre-shared certificate, concatenate the crt files containing the certificate and the certificate chain into a single file as follows:

cat example.com.crt example.com.2022.crt > full.crt

Next, create the pre-shared certificate from this file and the private key file:

gcloud compute ssl-certificates create example-com \
  --project example-project \
  --global \
  --certificate=full.crt \
  --private-key=example_2022.key

The final part is the networking.gke.io/v1beta1.FrontendConfig annotation which links the ingress resource to the frontend config.

The FrontendConfig, finally, is as follows:

apiVersion: networking.gke.io/v1beta1
kind: FrontendConfig
metadata:
  name: frontendconfig
  namespace: exposure
spec:
  redirectToHttps:
    enabled: true
  sslPolicy: gke-ingress-ssl-policy

In this configuration we see that the redirect from HTTP to HTTPS is enabled. There is also a reference to an SSL policy, which defines the SSL features. Such a policy can be created as follows, for instance:

gcloud compute ssl-policies create gke-ingress-ssl-policy \
  --profile MODERN \
  --min-tls-version 1.2